Bastion UI

Bastion UI is a website for supervising Genvid clusters. It is a GUI for the bastion-api.

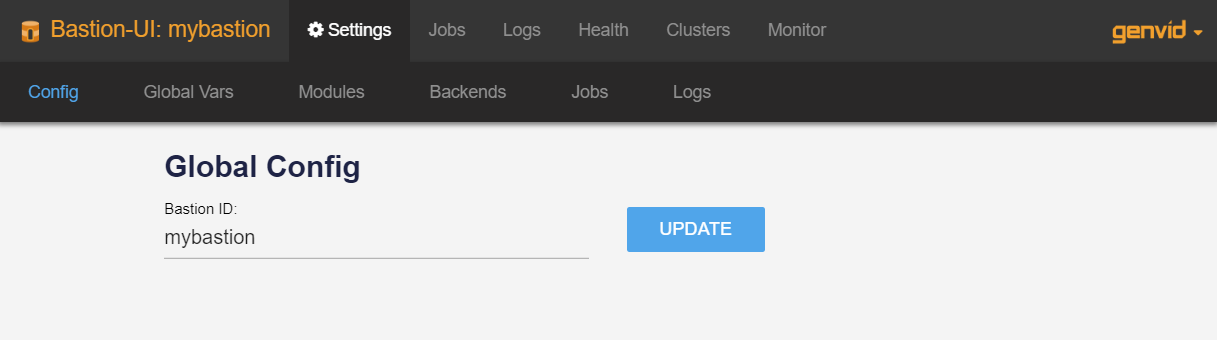

Settings page

This page is where you can change the Bastion-UI page configuration. You can also see and edit the GLOBAL VARS, MODULES, and BACKENDS.

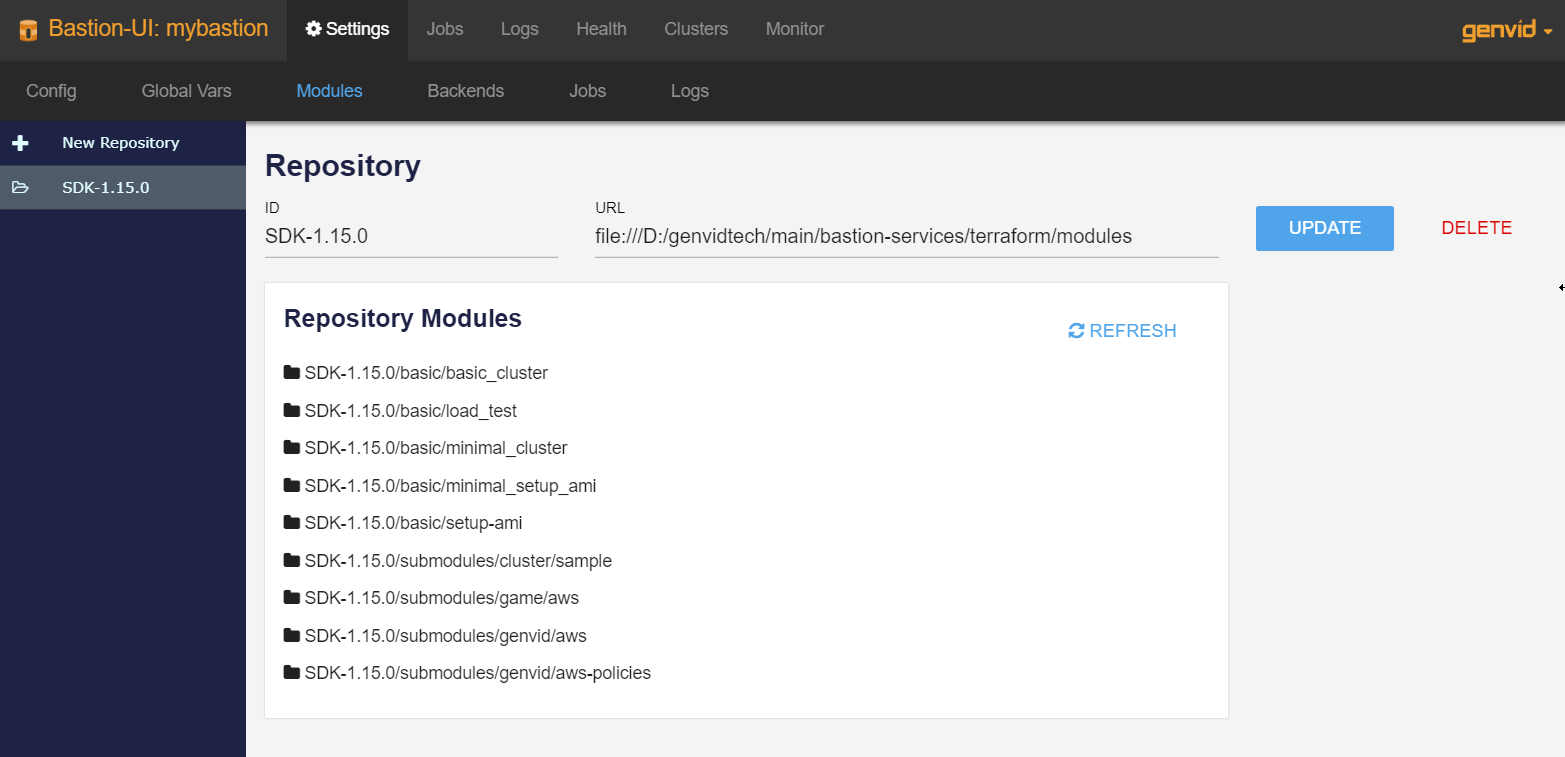

Modules Section

In the MODULES section, you will find the existing Terraform module repositories that you can edit or remove. Click REFRESH to update the modules in the repositories.

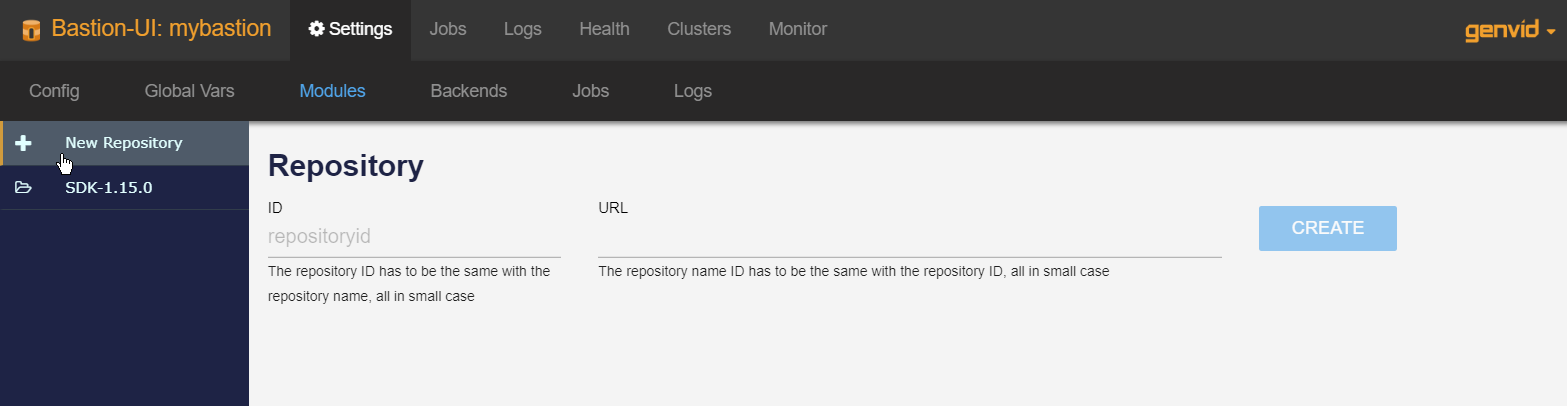

Click + New Repository to create a new repository.

- ID: The repository folder name, in all lowercase characters.

- URL: The URL of the folder where the repository exists.

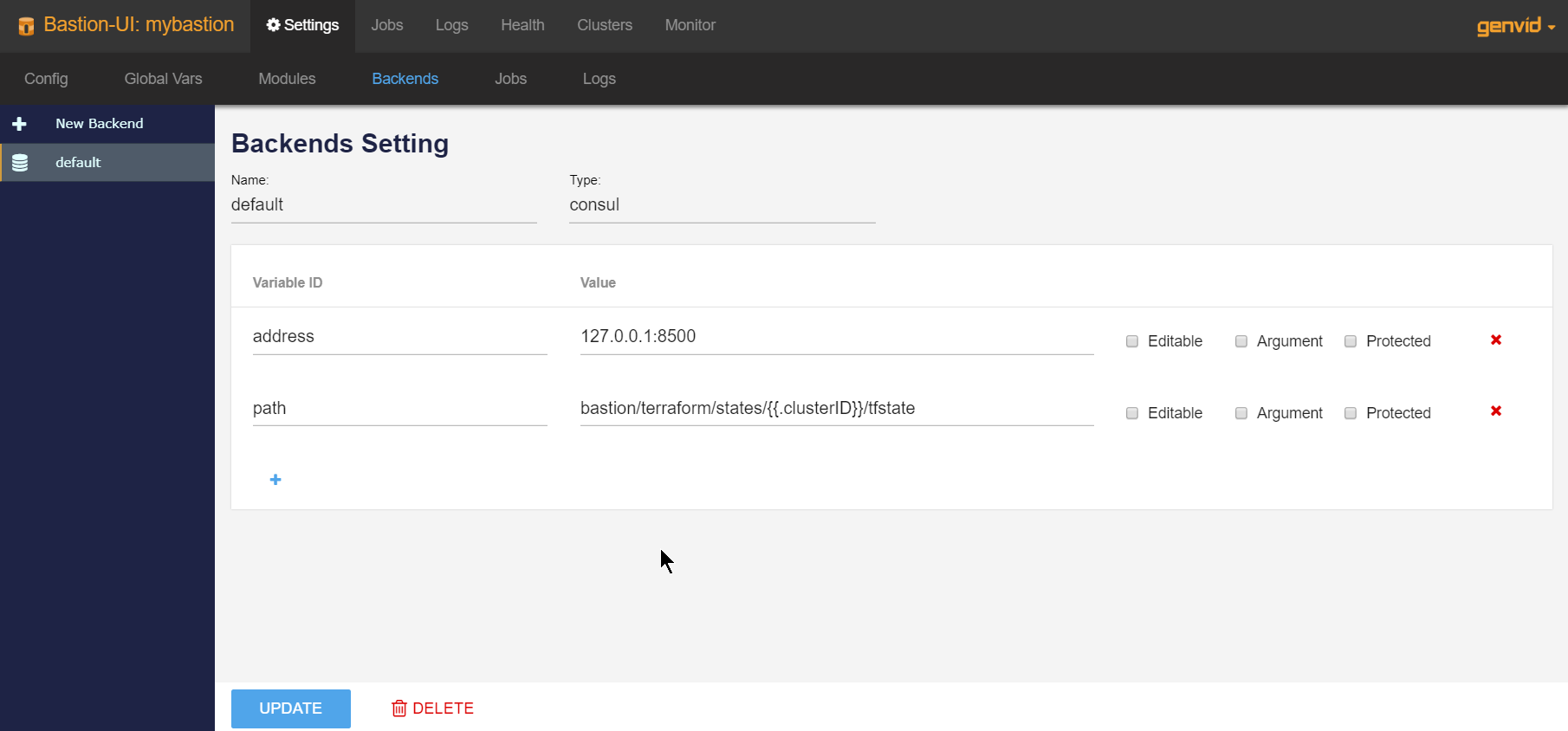

Backends Section

In the BACKENDS section, you can see the list of available backend configurations. A backend configuration is composed of its unique name, a type, and a list of variables. From here you can:

- Add, edit, or remove a backend configuration.

- Name: A unique ID for the backend.

- Type: A valid Terraform backend type.

- Variables: The arguments for the backend type, which you can add or remove. A variable is composed of an ID, a value, and a few properties.

- Variable ID: The argument name.

- Value: The argument value.

- Editable: Whether the argument can be edited when initializing a cluster.

- Argument: Whether the variable is passed to Terraform as an argument or configured through a file.

- Protected: Whether the value is masked like a password when initializing a cluster.

See also

Terraform’s Backends for documentation on Terraform backends.

The bastion-api applies interpolation to the variable’s value before initializing a cluster. For example, the text {{.clusterID}} is replaced with the ID of the cluster. For now, only clusterID, bastionID, and instanceID are supported.
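The substitution described above can be pictured with a short Python sketch. It is not the bastion-api implementation, only an illustration of the behavior: the `interpolate` function, the example state path, and the ID values are hypothetical, and raising an error for an unsupported placeholder is an assumption.

```python
import re

# Placeholders the text above lists as supported.
SUPPORTED_KEYS = {"clusterID", "bastionID", "instanceID"}

# Matches {{.name}}, allowing optional spaces inside the braces.
_PLACEHOLDER = re.compile(r"\{\{\s*\.(\w+)\s*\}\}")


def interpolate(value: str, context: dict[str, str]) -> str:
    """Replace each supported {{.key}} placeholder with its value from context."""

    def substitute(match: re.Match) -> str:
        key = match.group(1)
        if key not in SUPPORTED_KEYS:
            # Assumption: how unsupported placeholders are handled is not documented.
            raise KeyError(f"unsupported placeholder: {key}")
        return context[key]

    return _PLACEHOLDER.sub(substitute, value)


# Hypothetical IDs, for illustration only.
context = {"clusterID": "demo", "bastionID": "bastion-1", "instanceID": "i-01"}
print(interpolate("states/{{.clusterID}}/terraform.tfstate", context))
# prints: states/demo/terraform.tfstate
```

A value written this way lets one backend configuration be reused for several clusters, because each cluster resolves the placeholder to its own ID.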

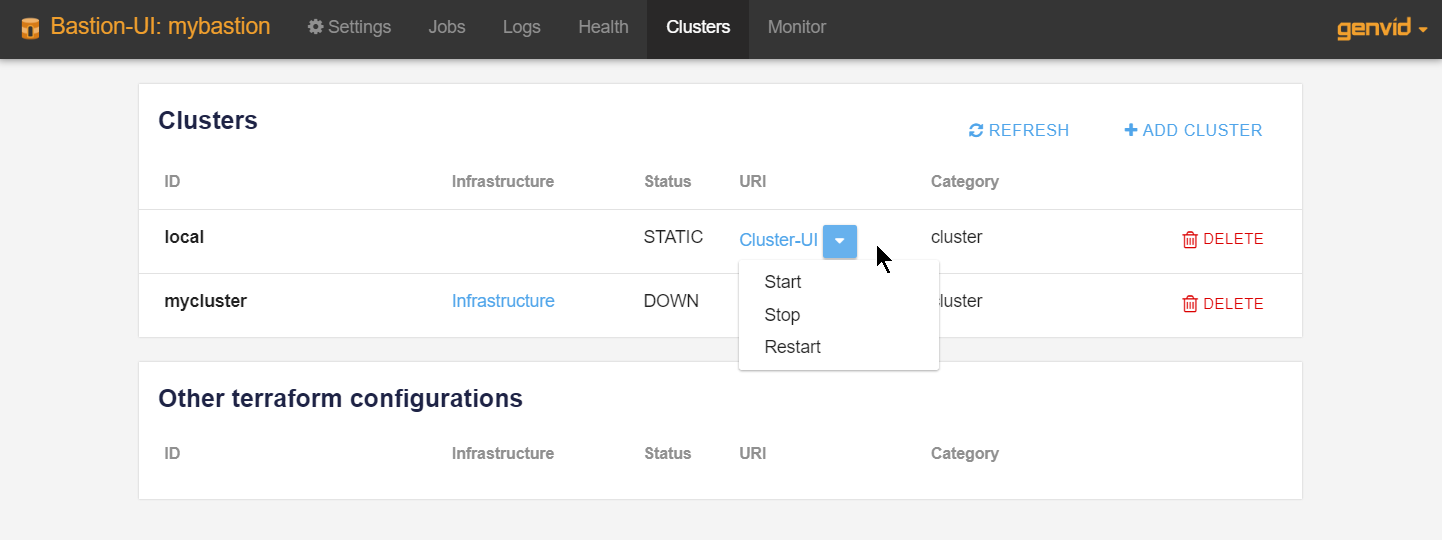

Clusters

This page displays all the clusters deployed. From here you can:

- Access each cluster’s specific Cluster-UI page.

- Start, stop, and restart the cluster-api.

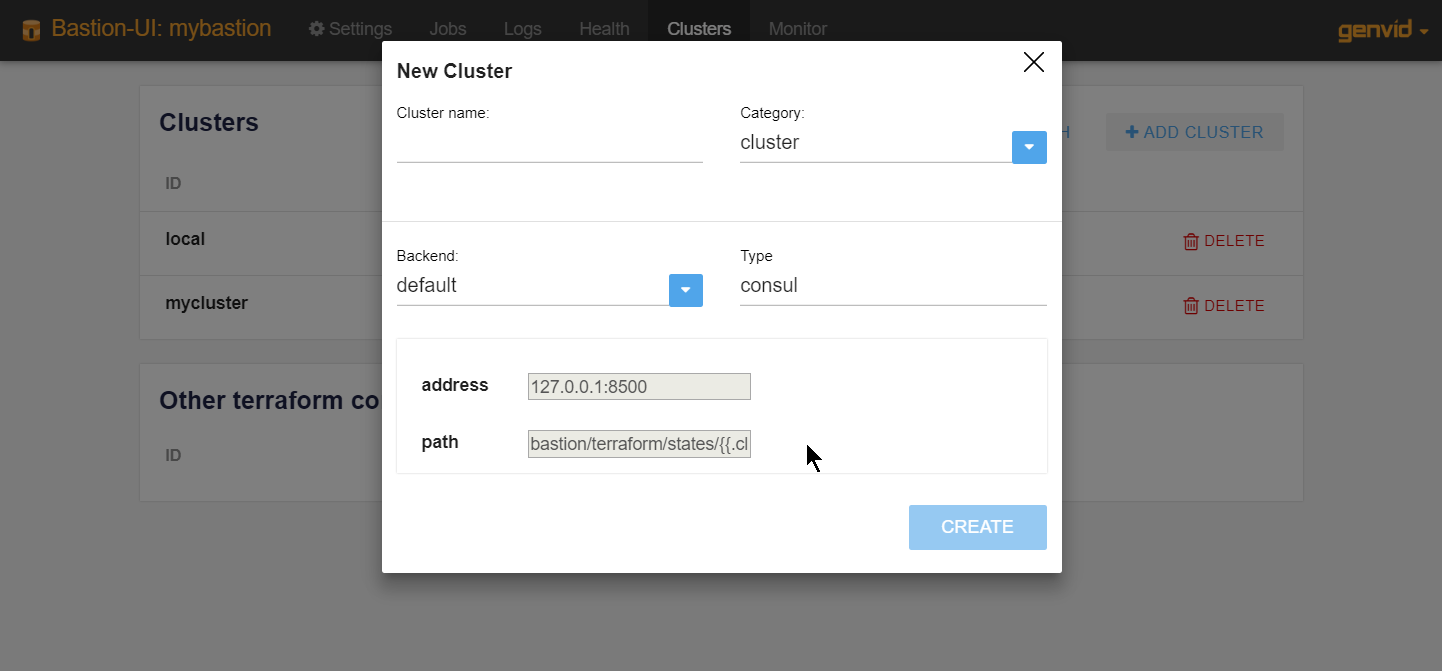

Create a cluster

Click Create to open the New cluster dialog.

- Cluster name: A unique ID for the cluster.

- Category: Helps you identify what the cluster does. The default is cluster.

- Backend: The Terraform backend to use. The dropdown lists all available backends.

- Type: The type of Terraform backend being used.

- Variables: Additional variables for configuring the backend.

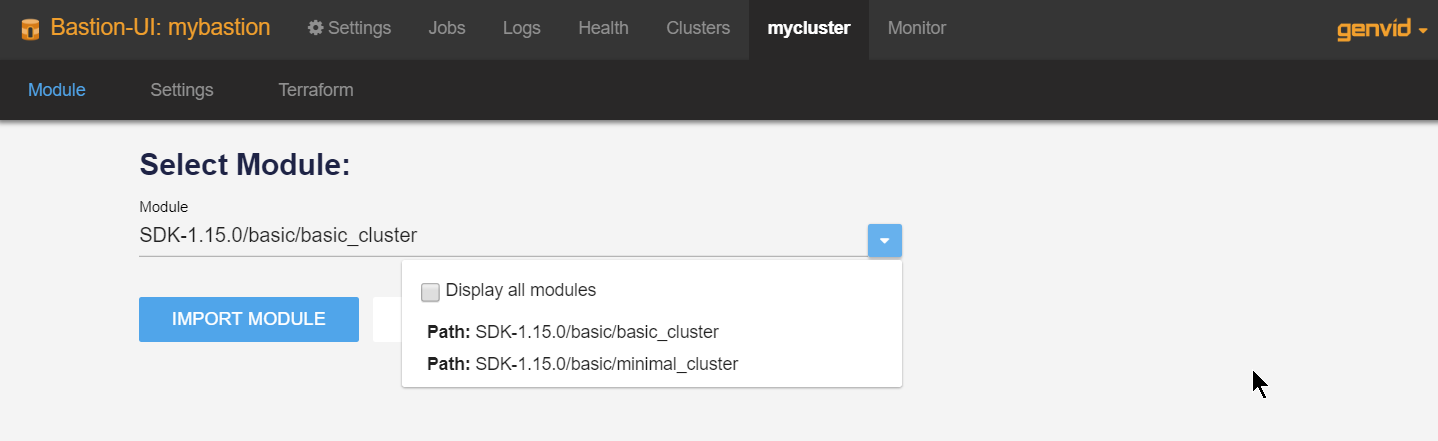

Cluster Module

This page is where you import a module into the Terraform configuration.

Important

This step is required.

To initialize the cluster:

- Select the appropriate module.

- Click Import module.

By default, the list displays only the modules that support cluster-api. Check Display all modules to see all available modules.

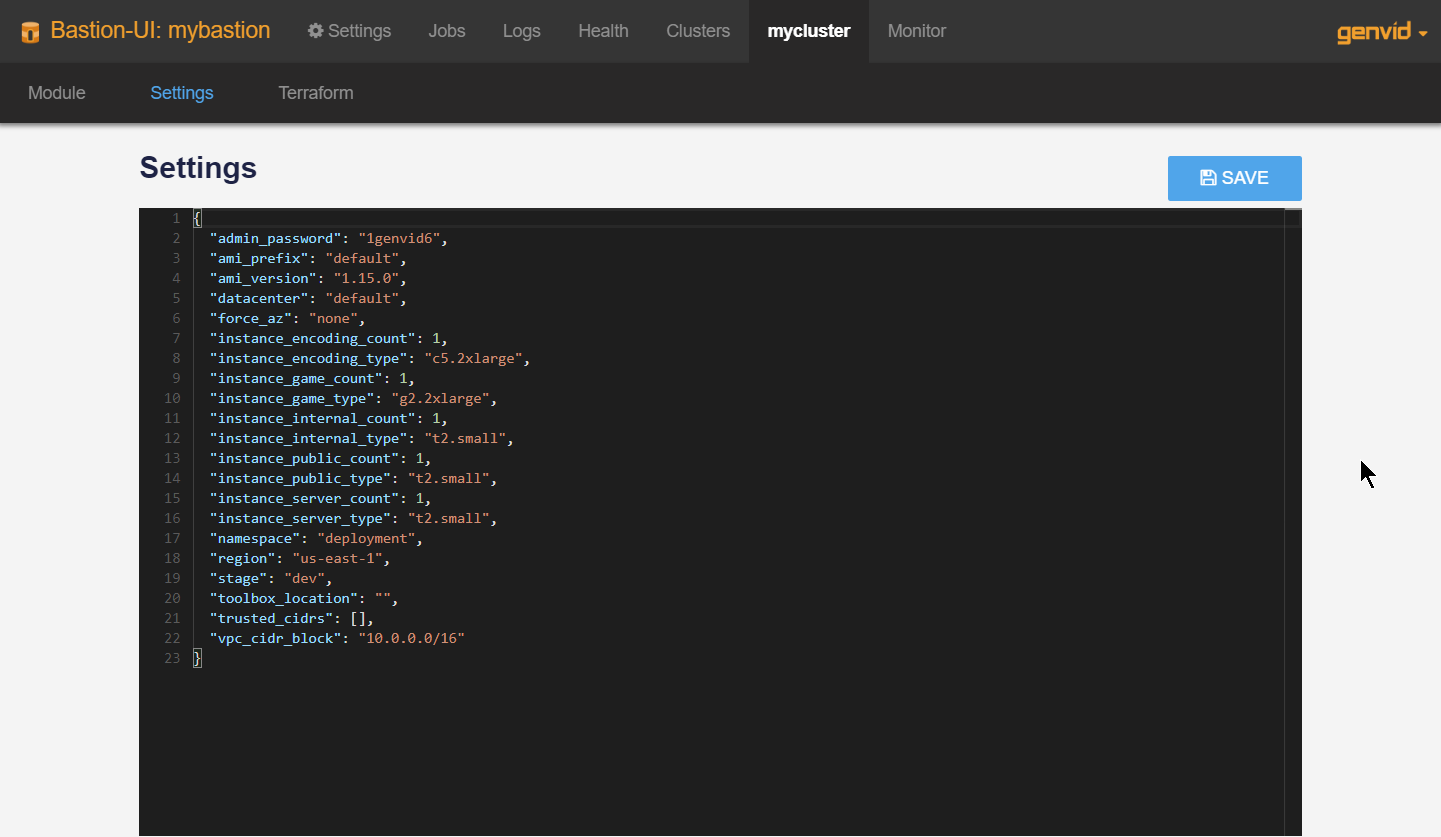

Cluster Settings

This page is where you configure the variables Terraform needs to build the infrastructure. The required variables depend on the module.

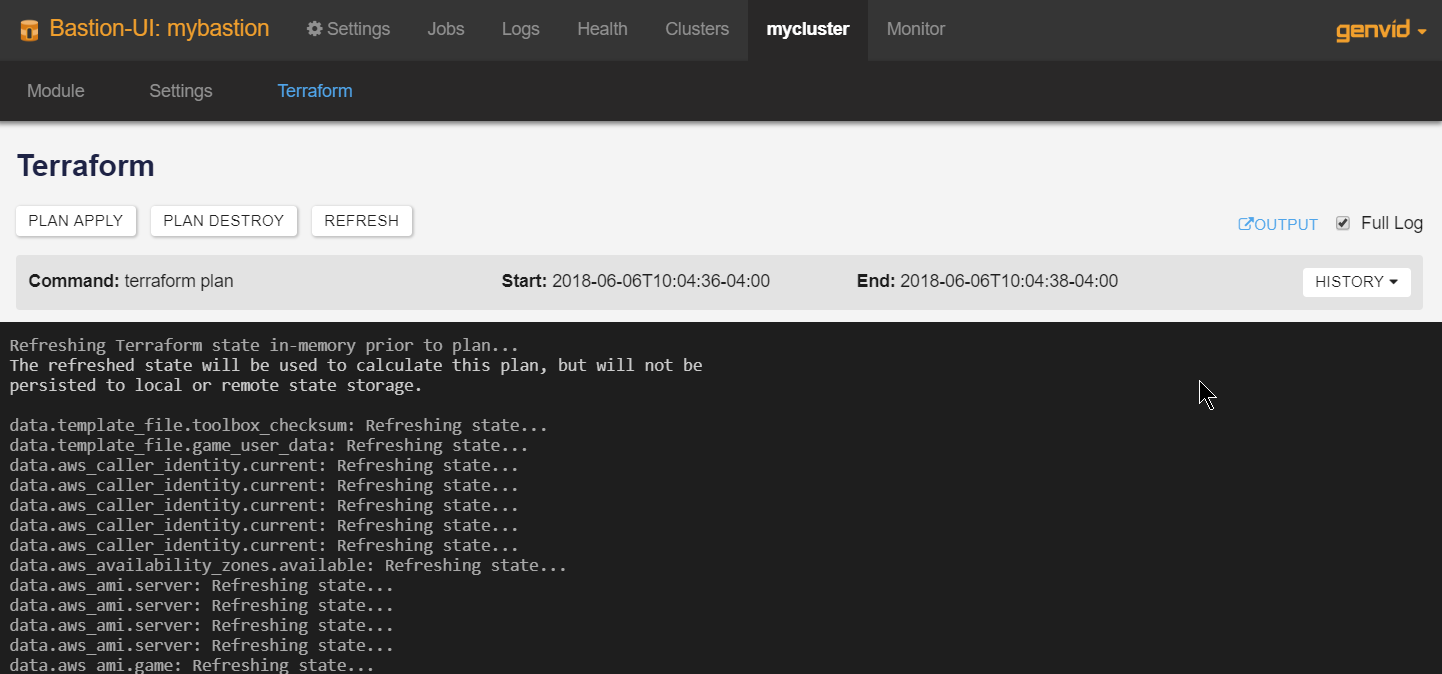

Cluster Terraform

This page is where you trigger Terraform commands.

- Plan Apply: Runs terraform plan.

- Plan Destroy: Runs terraform plan -destroy.

- Refresh: Runs terraform refresh.

- Output: Runs terraform output and shows the result in another window.

You can terminate or kill a command while it’s running.

- Terminate: Sends the terminate signal to the running command. Terraform first tries to shut down gracefully. If you send the signal a second time, Terraform stops the process immediately, which may result in data loss.

- Kill: Kills the Terraform process immediately. This may result in data loss.
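As a rough illustration of the buttons above, the following Python sketch maps each button label to the Terraform command line it runs and shows the difference between a graceful stop and a forced one. The `command_for` and `stop` helpers are hypothetical, and the exact signals the Bastion UI sends are not documented here, so treat the signal handling as an assumption.

```python
import subprocess

# Button label -> Terraform command line, as listed above.
TERRAFORM_COMMANDS = {
    "Plan Apply": ["terraform", "plan"],
    "Plan Destroy": ["terraform", "plan", "-destroy"],
    "Refresh": ["terraform", "refresh"],
    "Output": ["terraform", "output"],
}


def command_for(button: str) -> list[str]:
    """Return a copy of the argument list for a button label."""
    return list(TERRAFORM_COMMANDS[button])


def stop(process: subprocess.Popen, force: bool = False) -> None:
    """Terminate asks the process to shut down; Kill stops it outright."""
    if force:
        process.kill()       # SIGKILL on POSIX: cannot be caught
    else:
        process.terminate()  # SIGTERM on POSIX: the process may clean up first
```

A command could then be started with `subprocess.Popen(command_for("Plan Apply"), cwd=working_dir)`, where `working_dir` is a hypothetical directory holding the cluster's Terraform configuration, and stopped with `stop(process)` or `stop(process, force=True)`.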

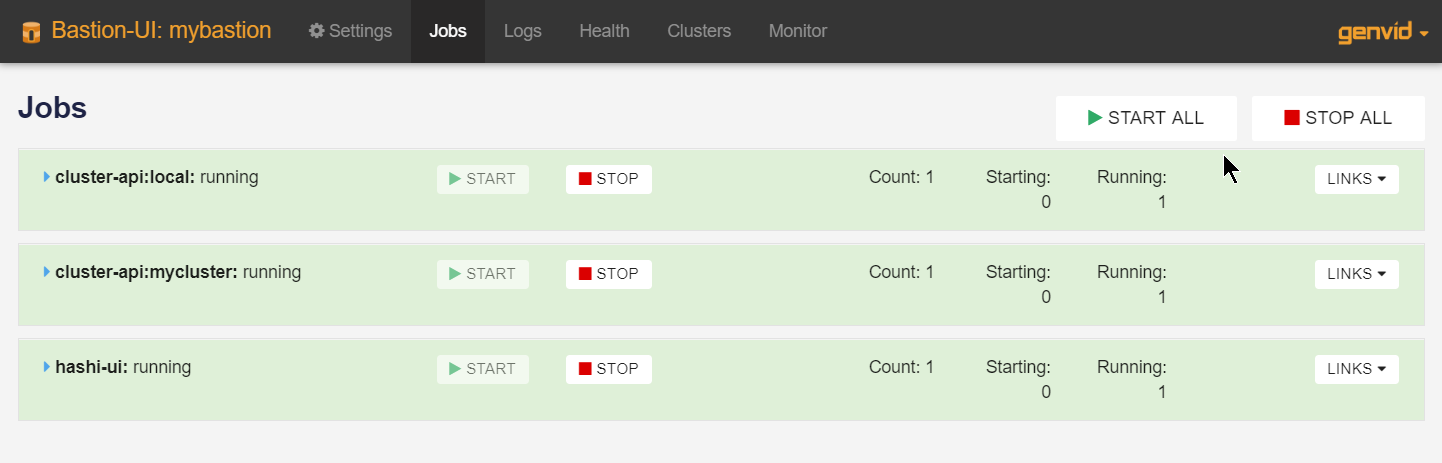

Jobs

This page is where you manage the jobs in the bastion cluster. From here you can:

- See the status of each job.

- Start and stop the stacks and jobs.

- Go to the corresponding hashi-ui Job page.

To edit the jobs:

- Click Settings to open the Settings page.

- Go to the Jobs section.

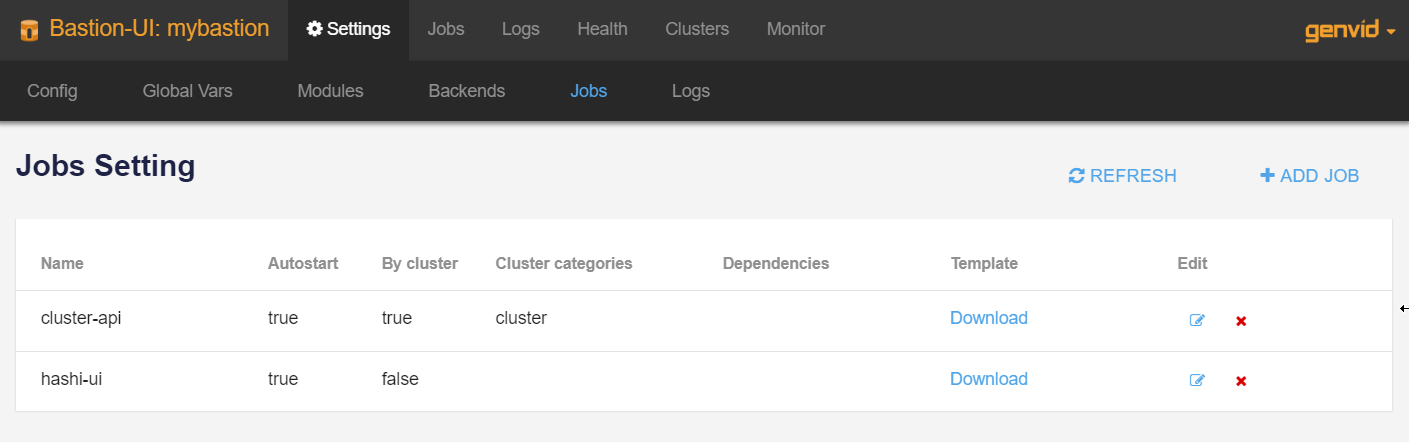

The Jobs Settings page shows the settings for all configured jobs. From here you can:

- Click Download to download a job template. The template is a text file with the .nomad.tmpl extension.

- Edit or delete job configurations.

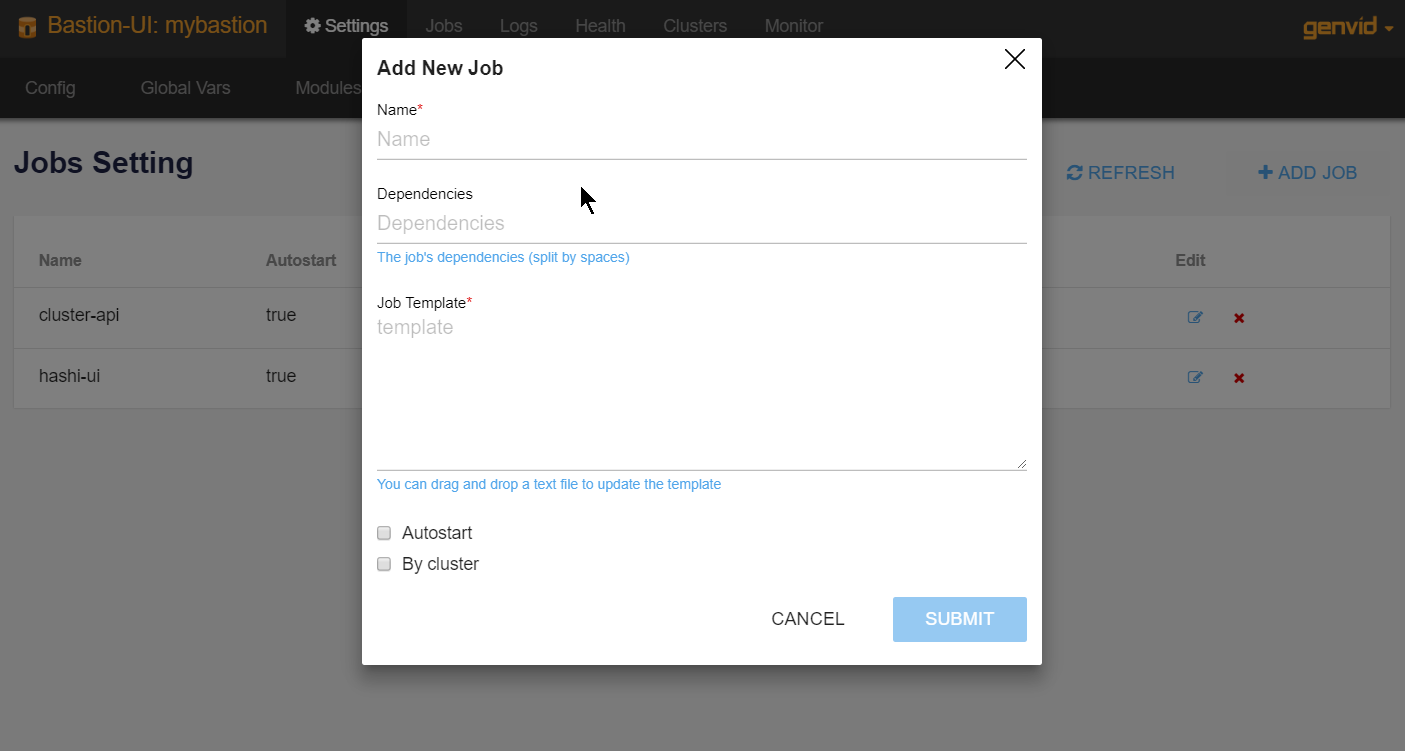

Click + ADD JOB to open the Add new job dialog.

- Name: The name of the job. It should match both the name of the template file and the name of the job in the template file.

- Dependencies: A list of services to wait on before starting the job. The default is None.

- Autostart: Check this option if the job must start automatically on a start command without arguments.

- By cluster: Check this option if the job must be started for each cluster individually. This adds the Cluster ID to the job name.

- Cluster categories: Specifies which cluster categories the job must run for. Only available if the By cluster option is checked.

- Template: Drag and drop an ASCII file here to update the template. The job name must be the same as the template name for the scripts to work correctly. See Nomad Templates for more information.
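To make the interaction between Autostart, By cluster, and Cluster categories more concrete, here is a minimal Python sketch. It is not how the Bastion scripts are implemented: the `Job` class, the `jobs_to_start` function, the job and cluster names, the `name-clusterID` naming scheme, and the rule that an empty category list matches every cluster are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    dependencies: list[str] = field(default_factory=list)
    autostart: bool = False
    by_cluster: bool = False
    cluster_categories: list[str] = field(default_factory=list)


def jobs_to_start(jobs: list[Job], clusters: dict[str, str]) -> list[str]:
    """Names of the jobs a start command without arguments would launch.

    clusters maps cluster ID -> category.
    """
    names = []
    for job in jobs:
        if not job.autostart:
            continue
        if not job.by_cluster:
            names.append(job.name)
            continue
        for cluster_id, category in clusters.items():
            # Assumption: an empty category list means "all categories".
            if not job.cluster_categories or category in job.cluster_categories:
                # Assumption: the real naming scheme may differ.
                names.append(f"{job.name}-{cluster_id}")
    return names


# Hypothetical jobs and clusters.
jobs = [
    Job("proxy", autostart=True),
    Job("metrics", autostart=True, by_cluster=True, cluster_categories=["cluster"]),
    Job("backup"),
]
clusters = {"alpha": "cluster", "beta": "staging"}
print(jobs_to_start(jobs, clusters))
# prints: ['proxy', 'metrics-alpha']
```

The sketch stores dependencies but does not act on them; waiting for the listed services before starting a job is left out to keep the example short.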

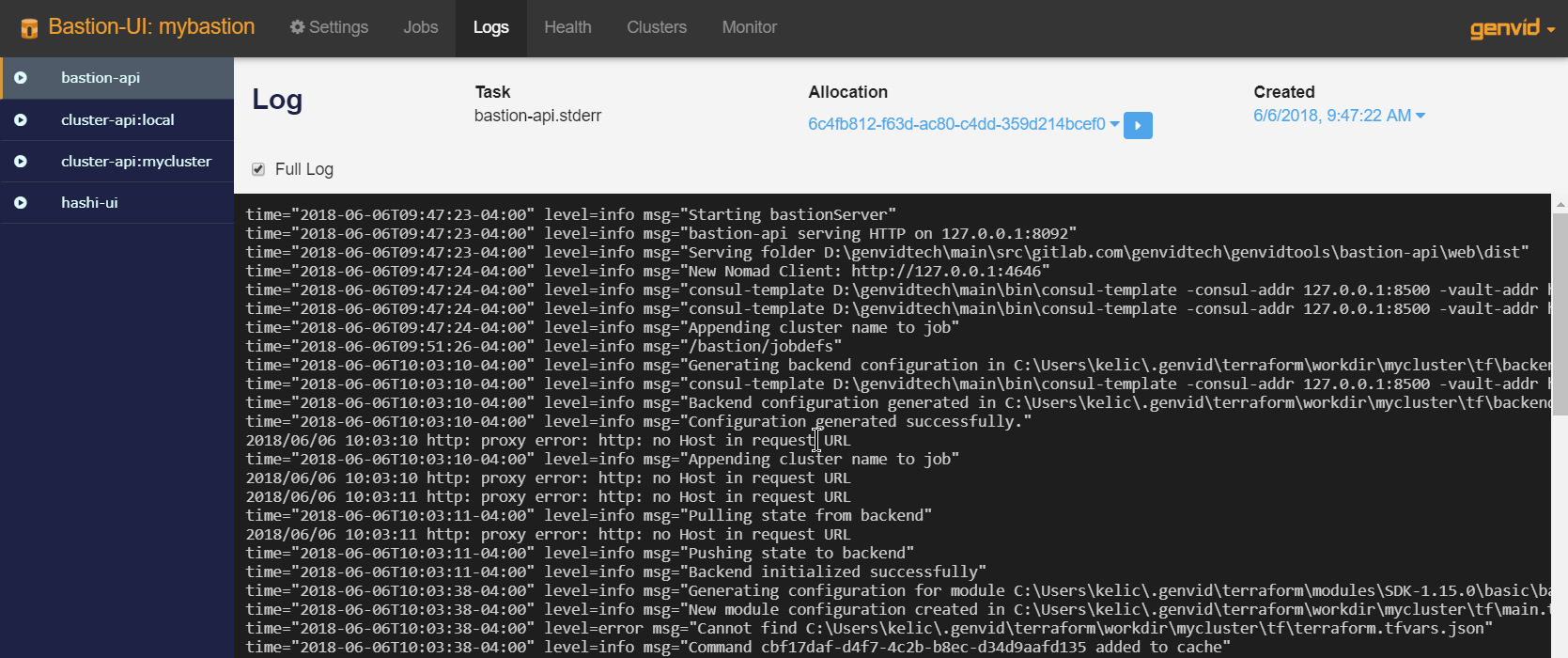

Logs

This page shows the available task logs. The logs refresh automatically when the service is running. You can set the log level either as a default or per allocation.

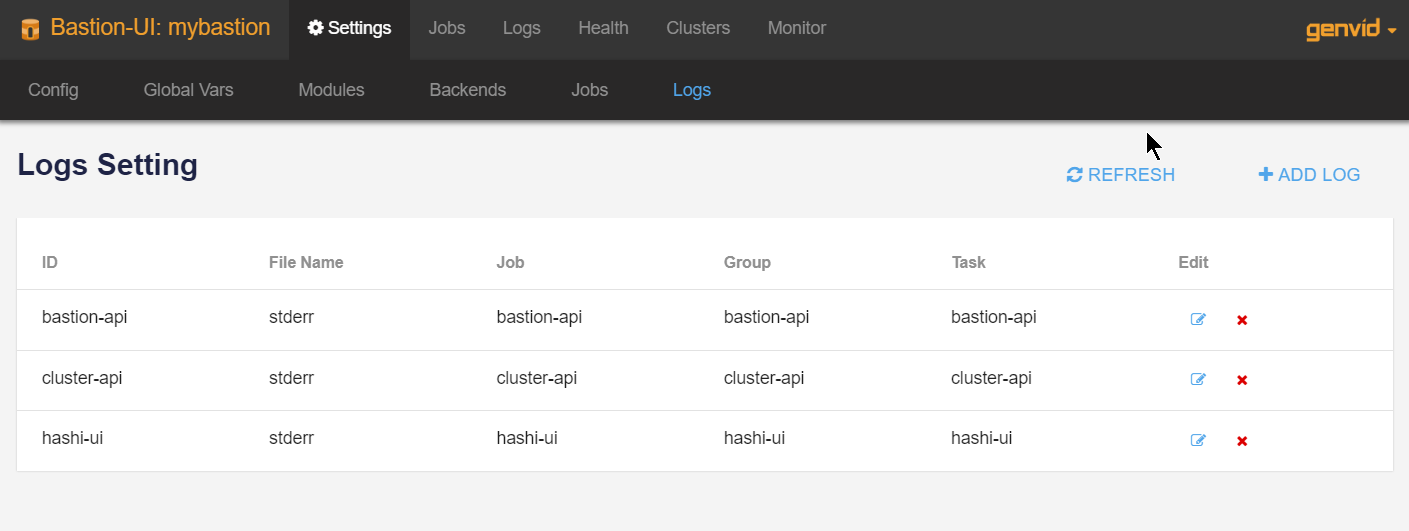

To edit the logs:

- Click Settings to open the Settings page.

- Go to the Logs section.

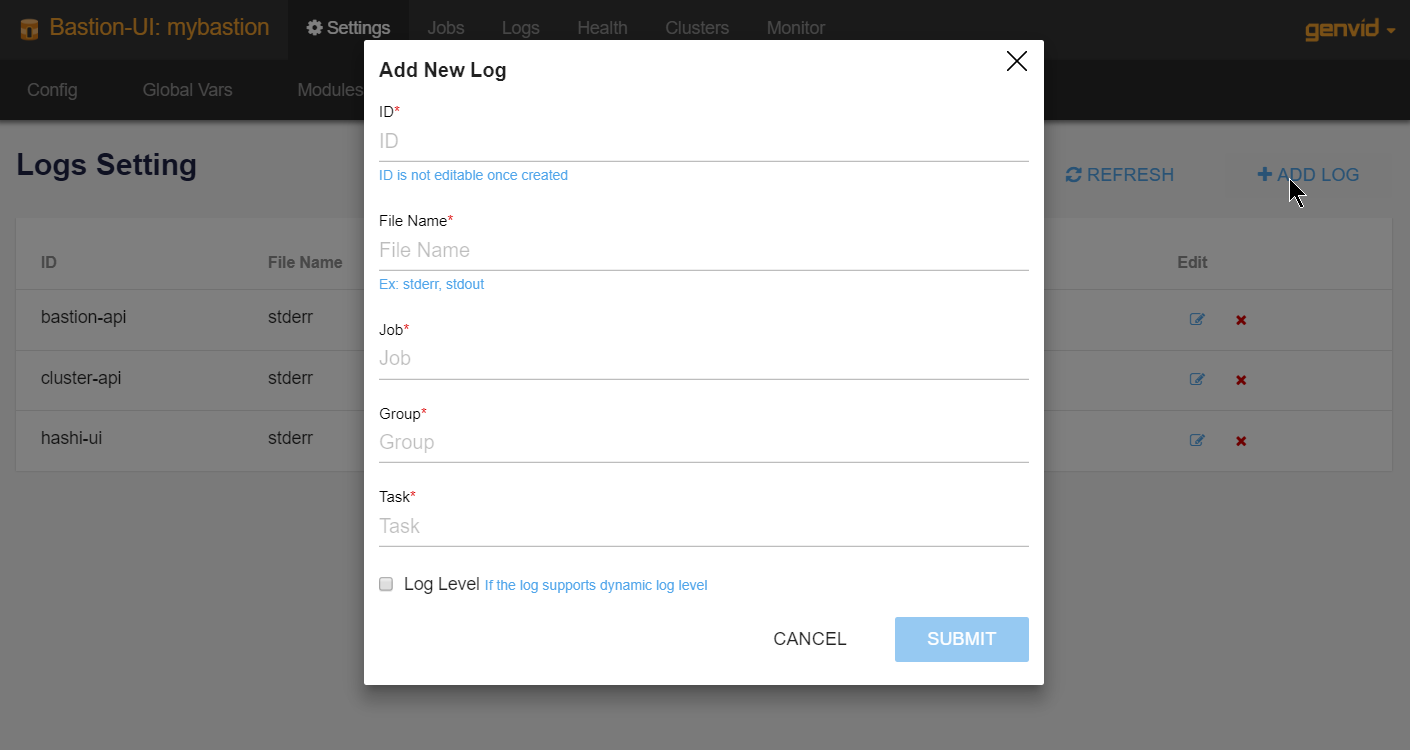

Click +Add log to open the Add New Log dialog.

- ID: A unique ID for the log. Once the ID is created, it can’t be changed.

- File Name: The file name for the saved log. Example: stderr, stdout.

- Job: The ID of the job being logged. If the job is configured by cluster, the log name includes the cluster ID.

- Group: The ID of the task group.

- Task: The ID of the task.

- Log Level: Check this option if the log should support dynamic log levels. Levels change the amount of information logged. The log level can be debug, info, warning, error, fatal, or panic.
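The effect of a log level on the amount of information logged can be sketched as a simple threshold. This Python example assumes the levels above are listed from most verbose to least verbose, which is the usual convention but is not stated in this page; the `is_logged` function is hypothetical.

```python
# Levels in the order listed above; assumed to run from most to least verbose.
LOG_LEVELS = ["debug", "info", "warning", "error", "fatal", "panic"]


def is_logged(message_level: str, configured_level: str) -> bool:
    """Return True if a message at message_level passes the configured threshold."""
    return LOG_LEVELS.index(message_level) >= LOG_LEVELS.index(configured_level)


print(is_logged("info", "warning"))   # prints: False
print(is_logged("error", "warning"))  # prints: True
```

Under this assumption, setting a log to debug records everything, while setting it to error keeps only error, fatal, and panic messages.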

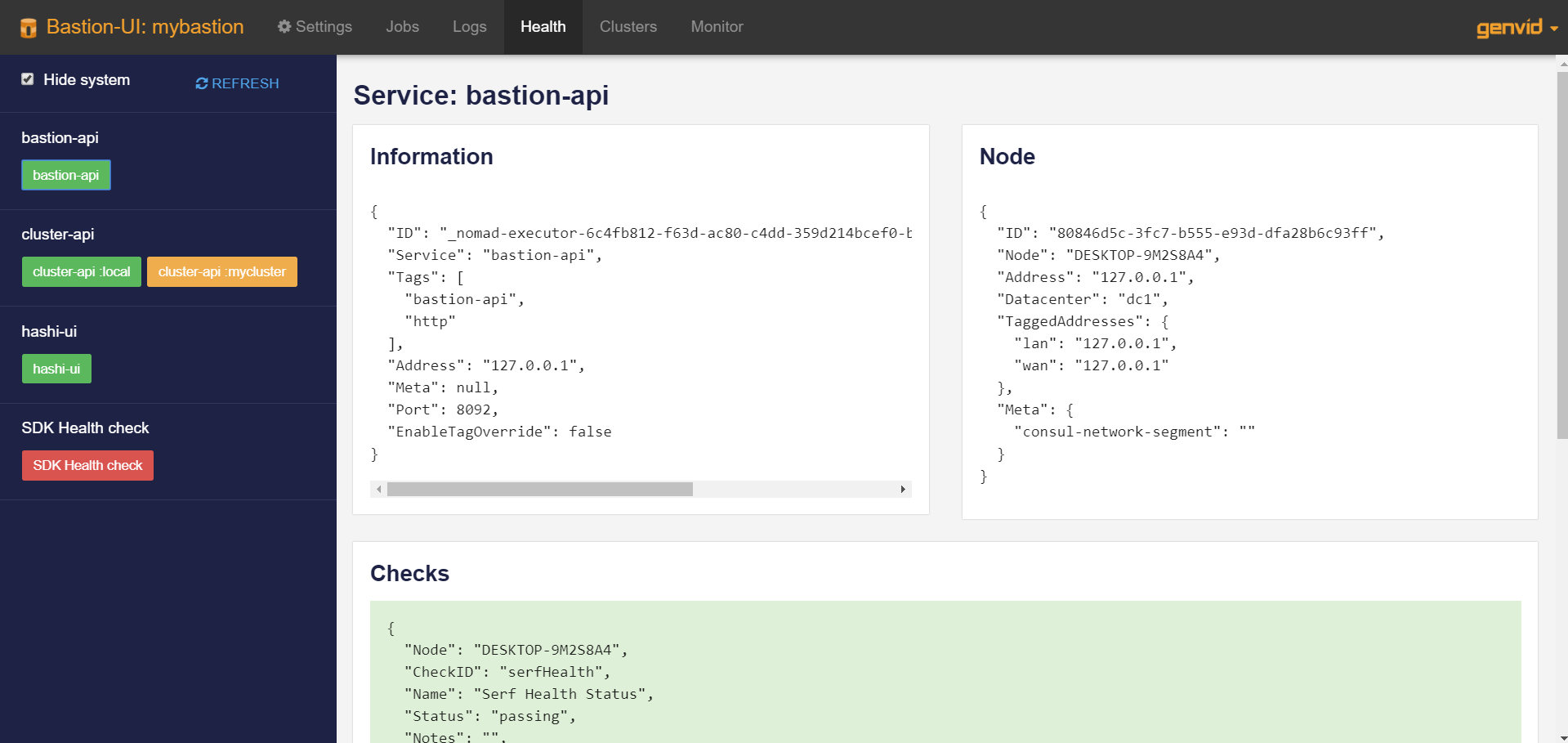

Health

This page displays the health of all services.

The left column shows the services and their instances. The instances have different colors according to their health.

- Green: All the health checks are passing.

- Orange: At least one health check is in a warning state.

- Red: At least one health check is in a critical state.
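The color rules can be expressed as a small Python function. This is a sketch, not the Bastion UI code: the state names passing, warning, and critical are assumed, as is the choice to let red take precedence over orange and to show green for an instance with no checks.

```python
def instance_color(check_states: list[str]) -> str:
    """Map the states of an instance's health checks to its display color."""
    # Assumption: a critical check outranks any non-passing, non-critical check.
    if "critical" in check_states:
        return "red"
    if "warning" in check_states:
        return "orange"
    # Every check is passing (or, by assumption, there are no checks).
    return "green"


print(instance_color(["passing", "passing"]))              # prints: green
print(instance_color(["passing", "warning"]))              # prints: orange
print(instance_color(["warning", "critical", "passing"]))  # prints: red
```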

On this page you can:

- Hide the system services to focus on the important services.

- Refresh the status.

- Click on a service instance to see its details.

Clicking a service instance displays its information in the detail section.

- Information: Details about the service.

- Node: The node the service is running on.

- Checks: The health checks for this service.